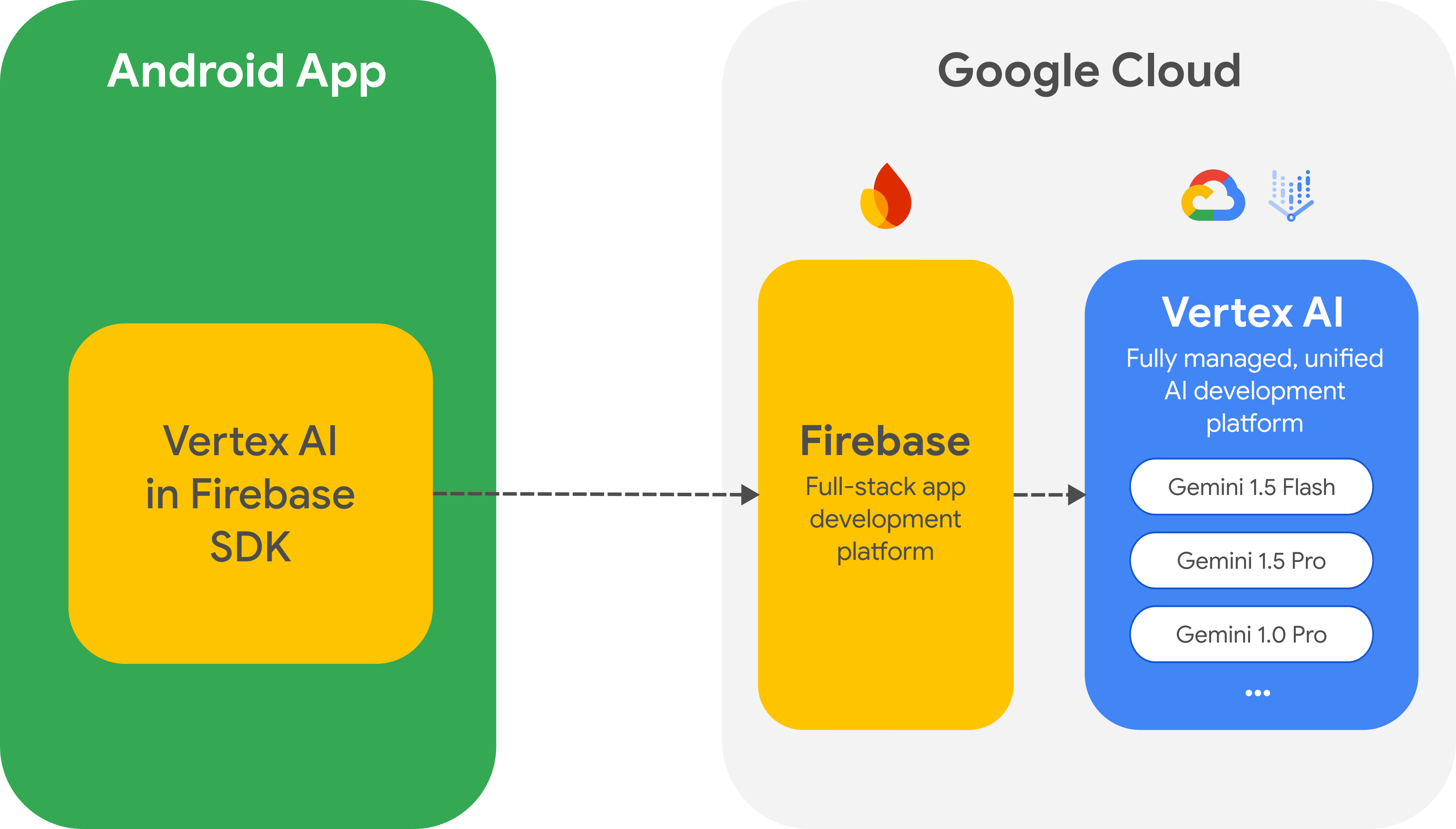

To access the Gemini API and the Gemini family of models directly from your app, we recommend using the Vertex AI in Firebase SDK for Android. This SDK is part of the larger Firebase platform that helps you build and run full-stack apps.

Migrate from the Google AI client SDK

The Vertex AI in Firebase SDK is similar to the Google AI client SDK, but it offers critical security options and other features for production use cases. For example, with Vertex AI in Firebase you can also use the following:

- Firebase App Check to protect the Gemini API from abuse by unauthorized clients.

- Firebase Remote Config to dynamically set and change values for your app in the cloud (for example, model names) without releasing a new version of your app.

- Cloud Storage for Firebase to include large media files in your requests to the Gemini API.

If you've already integrated the Google AI client SDK into your app, you can migrate to Vertex AI in Firebase.

Getting started

Before you interact with the Gemini API directly from your app, you'll need to get familiar with prompting and set up Firebase and your app to use the SDK.

Experiment with prompts

You can experiment with prompts in Vertex AI Studio. Vertex AI Studio is an IDE for prompt design and prototyping. It lets you upload files to test prompts with text and images and save a prompt to revisit it later.

Creating the right prompt for your use case is more art than science, which makes experimentation critical. You can learn more about prompting in the Firebase documentation.

Set up a Firebase project and connect your app to Firebase

Once you're ready to call the Gemini API from your app, follow the instructions in the Vertex AI in Firebase getting started guide to set up Firebase and the SDK in your app. That guide walks you through the following tasks:

- Set up a new or existing Firebase project, including using the pay-as-you-go Blaze pricing plan and enabling the required APIs.

- Connect your app to Firebase, including registering your app and adding your Firebase config file (google-services.json) to your app.

Add the Gradle dependency

Add the following Gradle dependencies to your app module:

Kotlin

```kotlin
dependencies {
    // ...
    implementation("com.google.firebase:firebase-vertexai:16.1.0")
}
```

Java

```kotlin
dependencies {
    // [...]
    implementation("com.google.firebase:firebase-vertexai:16.1.0")

    // Required to use `ListenableFuture` from Guava Android for one-shot generation
    implementation("com.google.guava:guava:31.0.1-android")

    // Required to use `Publisher` from Reactive Streams for streaming operations
    implementation("org.reactivestreams:reactive-streams:1.0.4")
}
```

Initialize the Vertex AI service and the generative model

Start by instantiating a GenerativeModel and specifying the model name:

Kotlin

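```kotlin
import com.google.firebase.Firebase
import com.google.firebase.vertexai.vertexAI

// Create a GenerativeModel instance backed by the Vertex AI Gemini API
val generativeModel = Firebase.vertexAI.generativeModel("gemini-1.5-flash")
```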

Java

```java
GenerativeModel gm = FirebaseVertexAI.getInstance().generativeModel("gemini-1.5-flash");
```

In the Firebase documentation, you can learn more about the available models for use with Vertex AI in Firebase. You can also learn about configuring model parameters.

Interact with the Gemini API from your app

Now that you've set up Firebase and your app to use the SDK, you're ready to interact with the Gemini API from your app.

Generate text

To generate a text response, call generateContent() with your prompt.

Kotlin

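```kotlin
// Note: `generateContent()` is a `suspend` function, which integrates well
// with existing Kotlin code. `scope` is a CoroutineScope you provide,
// such as `lifecycleScope` or `viewModelScope`.
scope.launch {
    val response = generativeModel.generateContent("Write a story about a green robot.")
    val resultText = response.text
}
```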

Java

```java
// In Java, create a `GenerativeModelFutures` from the `GenerativeModel`.
// Note that `generateContent()` returns a `ListenableFuture`. Learn more:
// https://developer.android.com/develop/background-work/background-tasks/asynchronous/listenablefuture
GenerativeModelFutures model = GenerativeModelFutures.from(gm);

Content prompt = new Content.Builder()
    .addText("Write a story about a green robot.")
    .build();

ListenableFuture<GenerateContentResponse> response = model.generateContent(prompt);

// `executor` is an Executor you supply; the callback runs on it.
Futures.addCallback(response, new FutureCallback<GenerateContentResponse>() {
    @Override
    public void onSuccess(GenerateContentResponse result) {
        String resultText = result.getText();
    }

    @Override
    public void onFailure(Throwable t) {
        t.printStackTrace();
    }
}, executor);
```

Generate text from images and other media

You can also generate text from a prompt that includes text plus images or other media. When you call generateContent(), you can pass the media as inline data (as shown in the example below). Alternatively, you can include large media files in a request by using Cloud Storage for Firebase URLs.

Kotlin

```kotlin
scope.launch {
    // `bitmap` is an android.graphics.Bitmap you've already loaded
    val response = generativeModel.generateContent(
        content {
            image(bitmap)
            text("What is the object in the picture?")
        }
    )
}
```

Java

```java
GenerativeModelFutures model = GenerativeModelFutures.from(gm);

Bitmap bitmap = BitmapFactory.decodeResource(getResources(), R.drawable.sparky);

Content prompt = new Content.Builder()
    .addImage(bitmap)
    .addText("What developer tool is this mascot from?")
    .build();

ListenableFuture<GenerateContentResponse> response = model.generateContent(prompt);

Futures.addCallback(response, new FutureCallback<GenerateContentResponse>() {
    @Override
    public void onSuccess(GenerateContentResponse result) {
        String resultText = result.getText();
    }

    @Override
    public void onFailure(Throwable t) {
        t.printStackTrace();
    }
}, executor);
```
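In the underlying request, media passed as inline data is carried base64-encoded, which makes it roughly a third larger than the raw bytes. The following JDK-only Java sketch estimates that encoded size so you can decide when a file is better sent through Cloud Storage for Firebase. The `InlineMediaSizing` class and its 5 MiB `INLINE_LIMIT_BYTES` cut-off are hypothetical placeholders, not limits documented by the SDK.

```java
// Hypothetical helper: estimates the size of media once base64-encoded for an inline request.
final class InlineMediaSizing {
    // Hypothetical cut-off of your choosing; NOT a documented SDK or API limit.
    static final long INLINE_LIMIT_BYTES = 5L * 1024 * 1024;

    // Standard padded base64 length: 4 output characters per (possibly partial) 3-byte group.
    static long base64Length(long rawBytes) {
        return 4 * ((rawBytes + 2) / 3);
    }

    // True if the encoded payload stays within the hypothetical inline limit.
    static boolean fitsInline(long rawBytes) {
        return base64Length(rawBytes) <= INLINE_LIMIT_BYTES;
    }
}
```

Files that fail `fitsInline()` under your chosen threshold would be candidates for uploading to Cloud Storage for Firebase and referencing by URL instead.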

Multi-turn chat

You can also support multi-turn conversations. Initialize a chat with the startChat() function, optionally providing a message history. Then call the sendMessage() function to send chat messages.

Kotlin

```kotlin
val chat = generativeModel.startChat(
    history = listOf(
        content(role = "user") { text("Hello, I have 2 dogs in my house.") },
        content(role = "model") { text("Great to meet you. What would you like to know?") }
    )
)

scope.launch {
    val response = chat.sendMessage("How many paws are in my house?")
}
```

Java

```java
// (Optional) create message history
Content.Builder userContentBuilder = new Content.Builder();
userContentBuilder.setRole("user");
userContentBuilder.addText("Hello, I have 2 dogs in my house.");
Content userContent = userContentBuilder.build();

Content.Builder modelContentBuilder = new Content.Builder();
modelContentBuilder.setRole("model");
modelContentBuilder.addText("Great to meet you. What would you like to know?");
Content modelContent = modelContentBuilder.build();

List<Content> history = Arrays.asList(userContent, modelContent);

// Initialize the chat (`model` is the GenerativeModelFutures created earlier)
ChatFutures chat = model.startChat(history);

// Create a new user message
Content.Builder messageBuilder = new Content.Builder();
messageBuilder.setRole("user");
messageBuilder.addText("How many paws are in my house?");
Content message = messageBuilder.build();

// This example streams the reply; chat.sendMessage(message) returns a
// single ListenableFuture instead.
Publisher<GenerateContentResponse> streamingResponse = chat.sendMessageStream(message);

StringBuilder outputContent = new StringBuilder();

streamingResponse.subscribe(new Subscriber<GenerateContentResponse>() {
    @Override
    public void onNext(GenerateContentResponse generateContentResponse) {
        String chunk = generateContentResponse.getText();
        if (chunk != null) {
            outputContent.append(chunk);
        }
    }

    @Override
    public void onComplete() {
        // ...
    }

    @Override
    public void onError(Throwable t) {
        t.printStackTrace();
    }

    @Override
    public void onSubscribe(Subscription s) {
        s.request(Long.MAX_VALUE);
    }
});
```
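Conceptually, a multi-turn chat keeps the conversation so far and includes it with each new message, then records the new exchange once the reply arrives; that is why the model can answer "How many paws?" using the earlier mention of two dogs. The following JDK-only Java sketch models that bookkeeping with hypothetical `Turn` and `ChatTranscript` classes. It is not the SDK's `ChatFutures` implementation and makes no network calls.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical stand-in for a chat message: a role ("user" or "model") and its text.
final class Turn {
    final String role;
    final String text;

    Turn(String role, String text) {
        this.role = role;
        this.text = text;
    }
}

// Hypothetical sketch of multi-turn bookkeeping; NOT the SDK's chat implementation.
final class ChatTranscript {
    private final List<Turn> history = new ArrayList<>();

    ChatTranscript(List<Turn> initialHistory) {
        // Optional starting history, like the history passed to startChat().
        history.addAll(initialHistory);
    }

    // What a request for the next message would contain: every prior turn plus the new user turn.
    List<Turn> requestFor(String userMessage) {
        List<Turn> request = new ArrayList<>(history);
        request.add(new Turn("user", userMessage));
        return request;
    }

    // After a reply arrives, keep both sides so later messages carry them as context.
    void recordExchange(String userMessage, String modelReply) {
        history.add(new Turn("user", userMessage));
        history.add(new Turn("model", modelReply));
    }

    // Read-only view of the conversation so far.
    List<Turn> history() {
        return Collections.unmodifiableList(history);
    }
}
```

Because every request carries the full history, long conversations send progressively larger requests.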

Stream the response

You can achieve faster interactions by not waiting for the entire result from the model and instead using streaming to handle partial results as they arrive. Use generateContentStream() to stream a response.

Kotlin

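```kotlin
scope.launch {
    var outputContent = ""
    generativeModel.generateContentStream("Write a story about a green robot.")
        .collect { response ->
            // A chunk's `text` can be null; append an empty string in that case
            outputContent += response.text.orEmpty()
        }
}
```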

Java

```java
// Note that in Java the method `generateContentStream()` returns a
// Publisher from the Reactive Streams library.
// https://www.reactive-streams.org/
GenerativeModelFutures model = GenerativeModelFutures.from(gm);

// Provide a prompt that contains text
Content prompt = new Content.Builder()
    .addText("Write a story about a green robot.")
    .build();

Publisher<GenerateContentResponse> streamingResponse = model.generateContentStream(prompt);

StringBuilder outputContent = new StringBuilder();

streamingResponse.subscribe(new Subscriber<GenerateContentResponse>() {
    @Override
    public void onNext(GenerateContentResponse generateContentResponse) {
        String chunk = generateContentResponse.getText();
        if (chunk != null) {
            outputContent.append(chunk);
        }
    }

    @Override
    public void onComplete() {
        // ...
    }

    @Override
    public void onError(Throwable t) {
        t.printStackTrace();
    }

    @Override
    public void onSubscribe(Subscription s) {
        s.request(Long.MAX_VALUE);
    }
});
```
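The Java streaming examples rely on the Reactive Streams subscriber protocol: `onSubscribe()` comes first, the subscriber requests items, `onNext()` receives each chunk in order, and `onComplete()` or `onError()` ends the stream. The following sketch exercises the same protocol locally with the JDK's `java.util.concurrent.Flow` interfaces, which mirror Reactive Streams, and a `SubmissionPublisher` that emits plain strings in place of `GenerateContentResponse` chunks. `StreamAccumulator` is a hypothetical name, and this is a simulation, not the SDK's `Publisher`.

```java
import java.util.List;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;
import java.util.concurrent.TimeUnit;

// Hypothetical subscriber that accumulates streamed text chunks, using JDK Flow
// instead of org.reactivestreams (the method contracts are the same).
final class StreamAccumulator implements Flow.Subscriber<String> {
    private final StringBuilder output = new StringBuilder();
    private final CountDownLatch done = new CountDownLatch(1);
    private volatile Throwable error;

    @Override
    public void onSubscribe(Flow.Subscription subscription) {
        // Request everything up front, as the SDK examples do.
        subscription.request(Long.MAX_VALUE);
    }

    @Override
    public void onNext(String chunk) {
        // Chunks arrive one at a time, in order; append each partial result.
        output.append(chunk);
    }

    @Override
    public void onError(Throwable t) {
        error = t;
        done.countDown();
    }

    @Override
    public void onComplete() {
        done.countDown();
    }

    // Blocks until the stream ends; fine for a demo, not for an Android UI thread.
    String awaitResult(long timeoutSeconds) throws Exception {
        if (!done.await(timeoutSeconds, TimeUnit.SECONDS)) {
            throw new IllegalStateException("stream did not finish in time");
        }
        if (error != null) {
            throw new RuntimeException(error);
        }
        return output.toString();
    }

    // Publishes the given chunks and returns the concatenated text once the stream completes.
    static String collect(List<String> chunks) throws Exception {
        StreamAccumulator subscriber = new StreamAccumulator();
        try (SubmissionPublisher<String> publisher = new SubmissionPublisher<>()) {
            publisher.subscribe(subscriber);
            chunks.forEach(publisher::submit);
        } // close() signals onComplete after the already-submitted chunks
        return subscriber.awaitResult(5);
    }
}
```

In an app, rather than blocking as `awaitResult()` does, you would act on the accumulated text from `onComplete()`.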

Next steps

- Review the Vertex AI in Firebase sample app on GitHub.

- Start thinking about preparing for production, including setting up Firebase App Check to protect the Gemini API from abuse by unauthorized clients.

- Learn more about Vertex AI in Firebase in the Firebase documentation.