
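The Attribution Reporting API is designed to support key use cases for attribution and conversion measurement across apps and websites without relying on cross-party user identifiers. Compared with designs in common use today, implementers of the Attribution Reporting API should keep a few important high-level considerations in mind: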

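- Event-level reports contain low-fidelity conversion data, so plan for a small number of conversion values.

- Aggregatable reports contain higher-fidelity conversion data. Design your aggregation keys around your business requirements and the 128-bit key limit.

- Your solution's data model and processing should account for rate limits on available triggers, delays in when trigger event reports are sent, and the noise the API applies.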

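To help you plan your integration, this guide gives a comprehensive overview that may include features not yet implemented in the current phase of the Privacy Sandbox on Android Developer Preview. Where that's the case, we provide timeline guidance.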

On this page, "source" refers to a click or view, and "trigger" refers to a conversion.

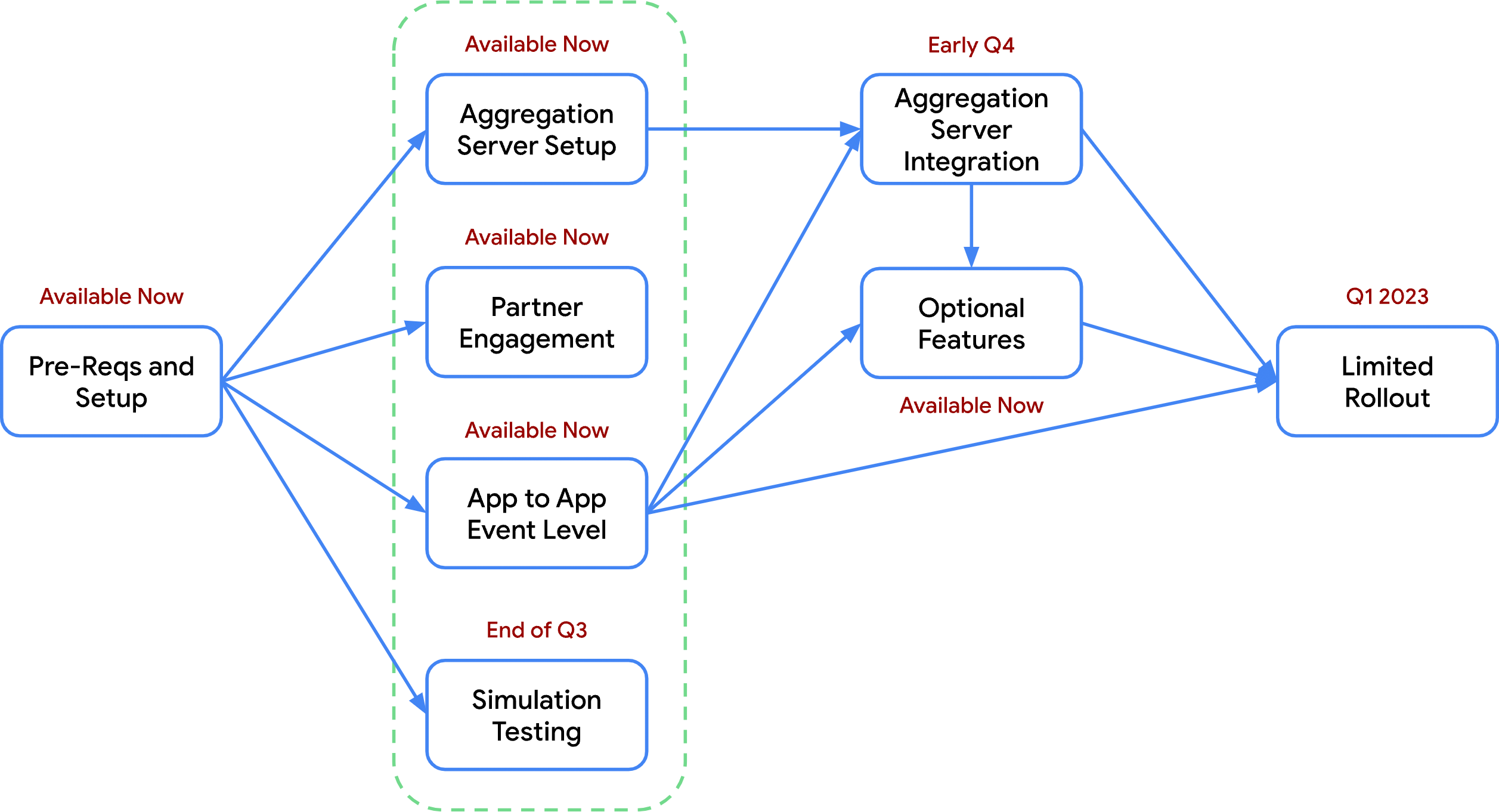

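The following diagram shows the different workflows you can choose from for attribution integration. Parts in the same column (circled in green) can be worked on in parallel; for example, partner engagement can be completed at the same time as app-to-app event-level attribution.

Figure 1: Attribution integration workflows.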

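Prerequisites and setup

Complete the steps in this section to build your understanding of the Attribution Reporting API. These steps help you get meaningful results when you use the API in the ad tech ecosystem.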

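Get familiar with the API

- Read the design proposal to familiarize yourself with the Attribution Reporting API and its features.

- Read the developer guide to learn how to add the code and API calls your use cases require.

- Submit feedback on this document, especially on open questions.

- Subscribe to Attribution Reporting API updates to stay informed about new features introduced in future releases.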

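Set up and test the sample app

- When you're ready to start integrating, set up the latest Developer Preview in Android Studio.

- Set up mock server endpoints for registering events and sending reports. You can use the mocks we provide together with tools available online.

- Download and run the sample app code to learn how to register sources and triggers.

- Set the time windows for sending reports. The API supports the following windows: 2 days, 7 days, or a custom period (2 to 30 days).

- After you've run the sample app to register sources and triggers and the configured window has passed, verify that you've received event-level reports and encrypted aggregatable reports. To debug reports, you can force the reporting jobs to run so that reports are generated sooner.

- Review the results of app-to-app attribution. Confirm that the data in these results matches your expectations for both last-touch attribution and post-install attribution.

- Once you understand how the client API and server work together, use the sample app as a model for your own integration. Set up your own production server and add event registration calls to your app.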
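While testing the sample app, you can model the reporting windows locally to know when to look for event-level reports. This Python sketch treats the windows as successive. It assumes they close 2 days and 7 days after source registration, with a final window at the custom period (2 to 30 days). Fixed windows that would close at or after the custom period are dropped. The function names are hypothetical. The API controls the real schedule, including any delay before reports are actually sent.

```python
from datetime import datetime, timedelta
from typing import List, Optional

DAY = timedelta(days=1)


def report_window_ends(source_time: datetime, custom_days: int = 30) -> List[datetime]:
    """End time of each reporting window for one source (assumed model)."""
    if not 2 <= custom_days <= 30:
        raise ValueError("custom period must be between 2 and 30 days")
    # Keep the fixed 2-day and 7-day windows only if they close before the custom period.
    days = [d for d in (2, 7) if d < custom_days] + [custom_days]
    return [source_time + d * DAY for d in days]


def window_for_trigger(source_time: datetime, trigger_time: datetime,
                       custom_days: int = 30) -> Optional[datetime]:
    """End of the first window closing at or after the trigger, or None if too late."""
    for end in report_window_ends(source_time, custom_days):
        if trigger_time <= end:
            return end
    return None
```

For example, a trigger 3 days after a source registered on 2023-01-01 falls in the window ending on 2023-01-08. You would look for its report only after that date.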

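Prepare for integration

Enroll your organization with Privacy Sandbox on Android. Enrollment is intended to prevent unnecessary duplication of ad tech platforms, which could otherwise result in collecting more information about user activity than needed.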

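Partner engagement

Attribution solutions developed by ad tech partners (MMPs, SSPs, DSPs) are often integrated solutions. The steps in this section help you prepare to work successfully with your ad tech partners.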

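- Schedule a meeting with your top measurement partners to discuss testing and adopting the Attribution Reporting API. Measurement partners include ad tech ad networks, SSPs, DSPs, advertisers, and any other partners you currently work with or want to work with.

- Work with your measurement partners to set an integration timeline, from initial testing through adoption.

- Agree with your measurement partners on which parts of the attribution design each party is responsible for.

- Set up a communication channel among measurement partners to coordinate timelines and end-to-end testing.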

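- Design the high-level data flow among measurement partners. Key considerations include:

  - How will measurement partners register attribution sources with the Attribution Reporting API?

  - How will ad tech ad networks register triggers with the Attribution Reporting API?

  - How will each ad tech platform validate API requests and return responses to complete source and trigger registration?

  - Beyond the reports generated by the Attribution Reporting API, do partners need to share any other reports?

  - Do partners need any other integration points or agreements? For example, do you and your partners need to handle duplicate conversions or agree on aggregation keys?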

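- If app-to-web attribution applies, schedule a meeting with your web measurement partners to discuss the design, testing, and adoption of the Attribution Reporting API. When you start these discussions, refer to the questions listed in the previous step.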

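Prototype app-to-app event-level attribution

This section helps you set up basic app-to-app attribution in your app or SDK using event-level reports. You must complete this section before you can start prototyping aggregation service attribution.

- Set up a collection server for event logging. To do this, you can generate a mock server from the provided spec, or set up your own server using the [sample server code][39].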

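- Add [event calls to register sources][22] when ads are displayed in your SDK or app.

  - Key considerations:

    - Make sure the source event ID is available and can be passed correctly to the source registration API call.

    - Make sure you can also pass in an `InputEvent` to register click sources.

    - Determine how to [configure source priority][23] for different event types. For example, assign higher priority to events considered more valuable, such as clicks over views.

    - For testing, the default expiry is perfectly fine. You can also [configure different expiries][24].

    - For testing, filters and attribution windows can stay at their default settings.

  - Optional considerations:

    - Design aggregation keys, if you're ready to use them.

    - Consider your redirect strategy once you've determined how you'll work with other measurement partners.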

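- Add [trigger event registration][25] to your SDK or app to record conversion events.

  - Key considerations:

    - Define trigger data with the [limited fidelity returned][26] in mind: how can you reduce the number of conversion types an advertiser needs so that they fit in 3 bits for clicks and 1 bit for views?

    - [Limits on available triggers in event reports][3]: event reports can include only a limited number of conversions per source, so how do you plan to reduce the total number of conversions per source that you rely on?

  - Optional considerations:

    - Don't create deduplication keys until you run accuracy tests.

    - Don't create aggregation keys and values until [simulation testing support][7] is ready.

    - Don't consider a redirect strategy until you've determined how you'll work with other measurement partners.

    - Trigger priority isn't required for testing.

    - Filters can be ignored in initial testing.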

- Test that source events are generated for ads and that triggers result in event reports.
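Source and trigger registration both come down to the JSON that your collection server returns when the API calls it. This Python sketch does two things. It builds hypothetical source and trigger registration responses, and it models how trigger data is reduced for click and view sources. It makes the following assumptions:

- The header names are `Attribution-Reporting-Register-Source` and `Attribution-Reporting-Register-Trigger`.
- The field names (`source_event_id`, `destination`, `expiry`, `priority`, `event_trigger_data`, `trigger_data`) come from the design proposal.
- The API keeps only the low 3 bits of `trigger_data` for click sources and the low bit for view sources.

The priorities and the conversion mapping are illustrative. Check the developer guide for the exact format.

```python
import json
from typing import Dict

# Hypothetical priorities: clicks are valued above views.
CLICK_PRIORITY = 100
VIEW_PRIORITY = 0

# Hypothetical mapping of an advertiser's conversion types to 3-bit codes.
# Odd codes are revenue-bearing, so the low bit alone (all that a view source
# keeps, under the assumption above) still separates revenue from non-revenue.
CONVERSION_CODES = {
    "install": 0,
    "purchase": 1,
    "sign_up": 2,
    "subscription": 3,
    "add_to_cart": 4,
    "high_value_purchase": 5,
    "other_engagement": 6,
    "other_revenue": 7,
}


def source_headers(source_event_id: int, destination: str, is_click: bool,
                   expiry_days: int = 30) -> Dict[str, str]:
    """Response header a collection server might return to register a source."""
    if not 0 <= source_event_id < 2 ** 64:
        raise ValueError("source_event_id must fit in an unsigned 64-bit integer")
    body = {
        "source_event_id": str(source_event_id),
        "destination": destination,
        "expiry": str(expiry_days * 24 * 60 * 60),  # seconds
        "priority": str(CLICK_PRIORITY if is_click else VIEW_PRIORITY),
    }
    return {"Attribution-Reporting-Register-Source": json.dumps(body)}


def trigger_headers(conversion_type: str) -> Dict[str, str]:
    """Response header a collection server might return to register a trigger."""
    code = CONVERSION_CODES.get(conversion_type, CONVERSION_CODES["other_engagement"])
    body = {"event_trigger_data": [{"trigger_data": str(code)}]}
    return {"Attribution-Reporting-Register-Trigger": json.dumps(body)}


def reported_trigger_data(code: int, source_is_click: bool) -> int:
    """Assumed truncation: 3 bits survive for click sources, 1 bit for view sources."""
    return code % 8 if source_is_click else code % 2
```

With this mapping, a subscription (code 3) attributed to a view is reported as 1. View-attributed reports therefore still separate revenue-bearing conversions from the rest.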

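Simulation testing

This section walks you through testing how converting your current conversions into event reports and aggregatable reports could affect your reporting and optimization systems, so you can start impact testing before you complete your integration.

Testing works by simulating the following process: generating event reports and aggregatable reports from your existing historical conversion records, then getting aggregated results from a simulated aggregation service. You can compare these results with your historical conversion data to see how report accuracy changes.

You can train optimization models (for example, predicted conversion rate calculations) on these reports and compare their accuracy with models based on your current data. You can also use this opportunity to try different aggregation key structures and see how they affect results.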

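- Set up the Measurement Simulation library on your local machine.

- Read the spec to learn how your conversion data must be formatted to be compatible with the simulated report generator.

- Design aggregation keys based on your business requirements.

  - Key considerations:

    - Consider the key dimensions your customers or partners need to aggregate by, and focus your evaluation on those dimensions.

    - Determine the minimum number of aggregation dimensions and the cardinality you need to meet your requirements.

    - Make sure the source-side and trigger-side key pieces don't exceed 128 bits.

    - If your solution needs to attribute multiple values to each trigger event, be sure to scale those values against the maximum contribution budget (the L1 budget). This helps minimize the impact of noise.

  - This example details how to set up one key to collect campaign-level aggregate conversion counts and another key to collect geo-level aggregate purchase values.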

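- Run the report generator to create event reports and aggregatable reports.

- Run the aggregatable reports through the simulated aggregation service to get summary reports.

- Run utility experiments:

  - Compare the total conversions in event-level reports and summary reports against your historical conversion data to determine the accuracy of conversion reporting. For the most accurate results, run test reports and comparisons across a broad, representative set of advertisers.

  - Retrain your models on event-level report data (and possibly summary report data as well). Compare their accuracy with models built on historical training data.

  - Try different batching strategies to see how they affect results.

    - Key considerations:

      - Timeliness of summary reports (for bid adjustments).

      - Average frequency of attributable events on the device. For example, lapsed-user regression based on historical purchase event data.

      - Noise level. More batches mean smaller aggregates, and noise has a proportionally larger effect on smaller aggregates.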
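You can explore the aggregation key and batching considerations above with a small local model before you run the Measurement Simulation library. This Python sketch is not that library's API. It makes these assumptions:

- Each source has a contribution budget (L1) of 65,536.
- The key layout is hypothetical: a trigger-side key type sits in the top 8 bits, and a source-side campaign or geo ID sits in the low 64 bits.
- Each batch sum receives Laplace noise with scale L1/ε.

All function names are illustrative.

```python
import math
import random
from typing import Dict, List, Tuple

L1_BUDGET = 65_536  # assumed maximum contribution budget per source
KEY_BITS = 128
TYPE_SHIFT = KEY_BITS - 8  # hypothetical: trigger-side key type in the top 8 bits
COUNT_KEY_TYPE, VALUE_KEY_TYPE = 1, 2


def aggregation_key(source_id: int, key_type: int) -> int:
    """OR a source-side piece (ID in the low 64 bits) with a trigger-side piece."""
    if not (0 <= source_id < 2 ** 64 and 0 <= key_type < 2 ** 8):
        raise ValueError("key piece does not fit in its field")
    return source_id | (key_type << TYPE_SHIFT)


def scaled_values(purchase_value: float, max_purchase_value: float) -> Dict[str, int]:
    """Split the L1 budget: half for the count key, half for the value key."""
    count_share = L1_BUDGET // 2
    value_share = L1_BUDGET - count_share
    capped = min(purchase_value, max_purchase_value)
    return {
        "count": count_share,
        "value": round(capped / max_purchase_value * value_share),
    }


def batch_by_days(reports: List[Tuple[int, int]], days_per_batch: int) -> Dict[int, int]:
    """Sum (day, scaled_value) contributions into batches of consecutive days."""
    sums: Dict[int, int] = {}
    for day, value in reports:
        batch = day // days_per_batch
        sums[batch] = sums.get(batch, 0) + value
    return sums


def laplace(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample as the difference of two exponential samples."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def add_noise(batch_sums: Dict[int, int], epsilon: float, seed: int = 0) -> Dict[int, float]:
    """Add assumed Laplace(L1 / epsilon) noise to every batch sum."""
    rng = random.Random(seed)
    scale = L1_BUDGET / epsilon
    return {batch: total + laplace(scale, rng) for batch, total in batch_sums.items()}


def expected_relative_noise(total: float, num_batches: int, epsilon: float) -> float:
    """Noise standard deviation (sqrt(2) * scale) divided by the average batch sum."""
    return math.sqrt(2) * (L1_BUDGET / epsilon) / (total / num_batches)
```

To read results back under this scaling, use these conversions:

- Divide a count key's summary value by 32,768 to estimate conversions.
- Divide a value key's summary value by 32,768 and multiply by `max_purchase_value` to estimate purchase value.

`expected_relative_noise` shows why smaller batches are noisier: the noise scale stays fixed while each batch sum shrinks.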

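Prototype aggregation service attribution: setup

The following steps make sure you can receive aggregatable reports for source and trigger events.

- Set up the aggregation service:

  - Set up your AWS account.

  - Enroll in the aggregation service with your coordinator.

  - Set up the aggregation service on AWS using the provided binaries.

- Design aggregation keys based on your business requirements. If you already completed this task in the app-to-app event-level section, skip this step.

- Set up a collection server for aggregatable reports. If you already created a collection server in the app-to-app event-level section, you can reuse it.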

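Prototype aggregation service attribution: integration

Before continuing, you must have completed both the Prototype aggregation service attribution: setup section and the Prototype app-to-app event-level attribution section.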

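- Add aggregation key data to source and trigger events. This may require passing more data about ad events (such as campaign IDs) into your SDK or app so that it can be included in aggregation keys.

- Collect app-to-app aggregatable reports from the source and trigger events you've registered with aggregation key data.

- When you run these aggregatable reports through the aggregation service, test different batching strategies to see how they affect results.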
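Adding aggregation key data means extending the source and trigger registration JSON. This Python sketch makes these assumptions:

- The field names are `aggregation_keys`, `aggregatable_trigger_data`, and `aggregatable_values`, as in the design proposal.
- The key names `campaignCounts` and `geoValue` are hypothetical.
- The final key is the source-side piece OR-ed with the trigger-side piece. The sketch models this combination locally.

Use it to check the keys and values you expect before you compare them with aggregation service output.

```python
import json
from typing import Dict

TYPE_SHIFT = 120  # hypothetical: trigger-side key type in the top 8 bits of 128


def source_aggregation_fields(campaign_id: int, geo_id: int) -> Dict:
    """Source-side key pieces, merged into the source registration JSON."""
    return {"aggregation_keys": {"campaignCounts": hex(campaign_id),
                                 "geoValue": hex(geo_id)}}


def trigger_aggregation_fields(scaled_purchase_value: int) -> Dict:
    """Trigger-side key pieces and values, merged into the trigger registration JSON."""
    return {
        "aggregatable_trigger_data": [
            {"key_piece": hex(1 << TYPE_SHIFT), "source_keys": ["campaignCounts"]},
            {"key_piece": hex(2 << TYPE_SHIFT), "source_keys": ["geoValue"]},
        ],
        "aggregatable_values": {"campaignCounts": 32768,
                                "geoValue": scaled_purchase_value},
    }


def expected_contributions(source: Dict, trigger: Dict) -> Dict[int, int]:
    """Local model of how the pieces combine: key = source piece OR trigger piece."""
    keys = {name: int(piece, 16) for name, piece in source["aggregation_keys"].items()}
    for entry in trigger["aggregatable_trigger_data"]:
        for name in entry["source_keys"]:
            if name in keys:
                keys[name] |= int(entry["key_piece"], 16)
    values = trigger["aggregatable_values"]
    return {key: values[name] for name, key in keys.items() if name in values}
```

In this example, the two contributions together use the full count share plus part of the value share. That keeps them within the assumed 65,536 budget.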

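Iterate on the design with optional features

The following are additional features you can incorporate into your measurement solution.

Generate debug keys with the Debug API (strongly recommended)

- After you set up debug keys, you can receive unmodified source or trigger event reports alongside the reports generated by the Attribution Reporting API. Use debug keys to compare reports and find bugs during integration.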
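Once debug keys are set on sources and triggers, you can join the API's reports with your own logs. This Python sketch assumes that reports carrying a debug key expose it as `trigger_debug_key` next to `trigger_data`. The function name and the shape of `logged_triggers` are hypothetical. Treat mismatches as leads rather than confirmed bugs, for two reasons:

- Event-level trigger data is truncated and noised.
- A report can be missing simply because its window hasn't closed yet.

```python
from typing import Dict, Iterable, List, Tuple


def compare_with_debug_keys(api_reports: Iterable[Dict[str, str]],
                            logged_triggers: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Compare API event-level reports with your own trigger log.

    api_reports: parsed report JSON; reports with a debug key are assumed to
    carry it as "trigger_debug_key".
    logged_triggers: trigger debug key -> trigger_data you sent at registration.
    Returns (debug keys with no report, debug keys whose trigger_data differs).
    """
    reported: Dict[str, str] = {}
    for report in api_reports:
        key = report.get("trigger_debug_key")
        if key is not None:
            reported[key] = report["trigger_data"]
    missing = sorted(k for k in logged_triggers if k not in reported)
    mismatched = sorted(k for k, data in reported.items()
                        if k in logged_triggers and logged_triggers[k] != data)
    return missing, mismatched
```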

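Customize attribution behavior

- Attribution for post-install triggers

  - Use this feature when post-install triggers need to be attributed to the same attribution source that drove the install, even if other eligible attribution sources occurred more recently.

  - For example, a user clicks an ad that leads to an install. After installing, the user clicks another ad and makes a purchase. In this case, the ad tech might want to attribute the purchase to the first click rather than to the re-engagement click.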

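- Use filters to fine-tune the data in event-level reports

  - You can set up conversion filters to ignore selected triggers and exclude them from event reports. Because the number of triggers per attribution source is limited, filters let event reports include only the triggers that provide the most useful information.

  - You can also use filters to selectively filter out certain triggers, effectively ignoring them. For example, if you have a campaign aimed at driving app installs, you might filter out post-install triggers so that they aren't attributed to that campaign's sources.

  - You can also use filters to customize trigger data based on source data. For example, a source can specify `"product": ["1234"]`, where `product` is the filter key and `1234` is the value. Any trigger with the filter key `product` and a value other than `1234` is ignored.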

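- Customize source and trigger priorities

  - When multiple attribution sources could be associated with one trigger, or multiple triggers could be attributed to one source, you can use a signed 64-bit integer priority to favor some source or trigger attributions over others.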
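The customizations above interact when the API chooses a source for a trigger. This Python sketch is a simplified local model, not the API's implementation. It makes these assumptions:

- A filter key present on both sides must share a value. Keys present on only one side are ignored.
- A source that drove the install keeps credit during a post-install exclusivity window. The `exclusivity_s` field that sets this window is hypothetical.
- Otherwise, the highest signed 64-bit priority wins, and ties go to the most recent source.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

INT64_MIN, INT64_MAX = -(2 ** 63), 2 ** 63 - 1


@dataclass
class Source:
    source_id: str
    time: int                                  # registration time, seconds
    priority: int = 0                          # signed 64-bit
    filter_data: Dict[str, List[str]] = field(default_factory=dict)
    drove_install: bool = False
    exclusivity_s: int = 0                     # hypothetical post-install window


def filters_match(filter_data: Dict[str, List[str]], filters: Dict[str, List[str]]) -> bool:
    """Keys on both sides must share a value; keys on only one side are ignored."""
    return all(set(values) & set(filter_data[key])
               for key, values in filters.items() if key in filter_data)


def attribute(sources: List[Source], trigger_time: int,
              trigger_filters: Dict[str, List[str]],
              install_time: Optional[int] = None) -> Optional[Source]:
    candidates = [s for s in sources
                  if s.time <= trigger_time and filters_match(s.filter_data, trigger_filters)]
    for s in candidates:
        if not INT64_MIN <= s.priority <= INT64_MAX:
            raise ValueError(f"priority of {s.source_id} is not a signed 64-bit integer")
    # Post-install exclusivity: the source that drove the install keeps the credit.
    if install_time is not None:
        for s in candidates:
            if s.drove_install and install_time <= trigger_time <= install_time + s.exclusivity_s:
                return s
    # Otherwise the highest priority wins; ties go to the most recent source.
    return max(candidates, key=lambda s: (s.priority, s.time), default=None)
```

Consider the post-install example above. A purchase shortly after the install stays with the install click. A purchase after the exclusivity window goes to the higher-priority re-engagement click. A trigger whose `product` filter matches no source is not attributed.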

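Work with MMPs and other parties

- Redirects to other third parties for source and trigger events

  - You can set up redirect URLs so that a single registration request can register with multiple ad tech platforms. This enables cross-network deduplication in attribution.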

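- Deduplication keys

  - When an advertiser registers the same trigger event through multiple ad tech platforms, you can use deduplication keys to resolve the ambiguity these duplicate reports create. If no deduplication key is provided, duplicate triggers may be reported to each ad tech platform as distinct triggers.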
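Redirects and deduplication keys both depend on agreements between partners. This Python sketch covers both under these assumptions:

- Redirects use the `Attribution-Reporting-Redirect` response header, filtered through a hypothetical partner allowlist.
- A shared unsigned 64-bit deduplication key is derived from the advertiser's order ID, so that every platform computes the same value.
- The API drops a trigger whose key was already attributed to the same source. The sketch models this locally.

```python
import hashlib
import json
from typing import Dict, List, Optional, Set, Tuple
from urllib.parse import urlparse

# Hypothetical allowlist of partners you agreed to redirect registrations to.
PARTNER_HOSTS = {"measure.partner-a.example", "mmp.partner-b.example"}


def source_response_headers(source_body: Dict, partner_urls: List[str]) -> List[Tuple[str, str]]:
    """Registration header plus one redirect header per approved HTTPS partner URL."""
    headers = [("Attribution-Reporting-Register-Source", json.dumps(source_body))]
    for url in partner_urls:
        parsed = urlparse(url)
        if parsed.scheme == "https" and parsed.hostname in PARTNER_HOSTS:
            headers.append(("Attribution-Reporting-Redirect", url))
    return headers


def dedup_key_for_order(order_id: str) -> int:
    """Derive the same unsigned 64-bit key from an order ID on every platform."""
    return int.from_bytes(hashlib.sha256(order_id.encode("utf-8")).digest()[:8], "big")


def accept_trigger(seen: Dict[str, Set[int]], source_id: str, dedup_key: Optional[int]) -> bool:
    """Local model: drop a trigger whose key was already attributed to the same source."""
    if dedup_key is None:
        return True  # no key: duplicates count as distinct triggers
    keys = seen.setdefault(source_id, set())
    if dedup_key in keys:
        return False
    keys.add(dedup_key)
    return True
```

You would place the derived key in each trigger registration, for example as `deduplication_key` inside `event_trigger_data`, following the design proposal. Check the developer guide for the exact field name.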

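Use cross-platform measurement

- Cross-app and cross-web attribution (coming at the end of Q4)

  - Supports use cases where a user sees an ad in an app and then converts in a mobile browser or in-app browser, or the reverse.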
