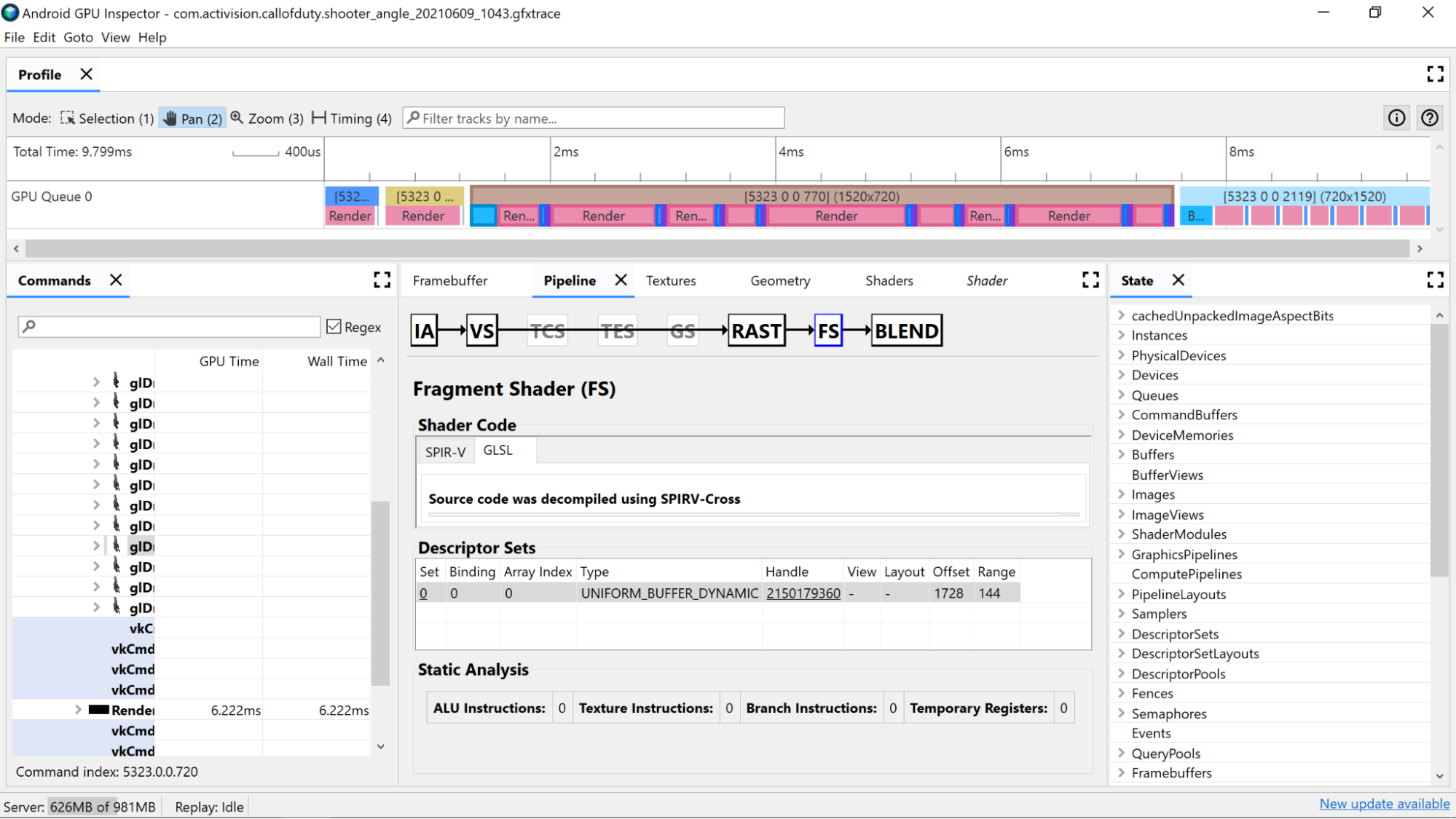

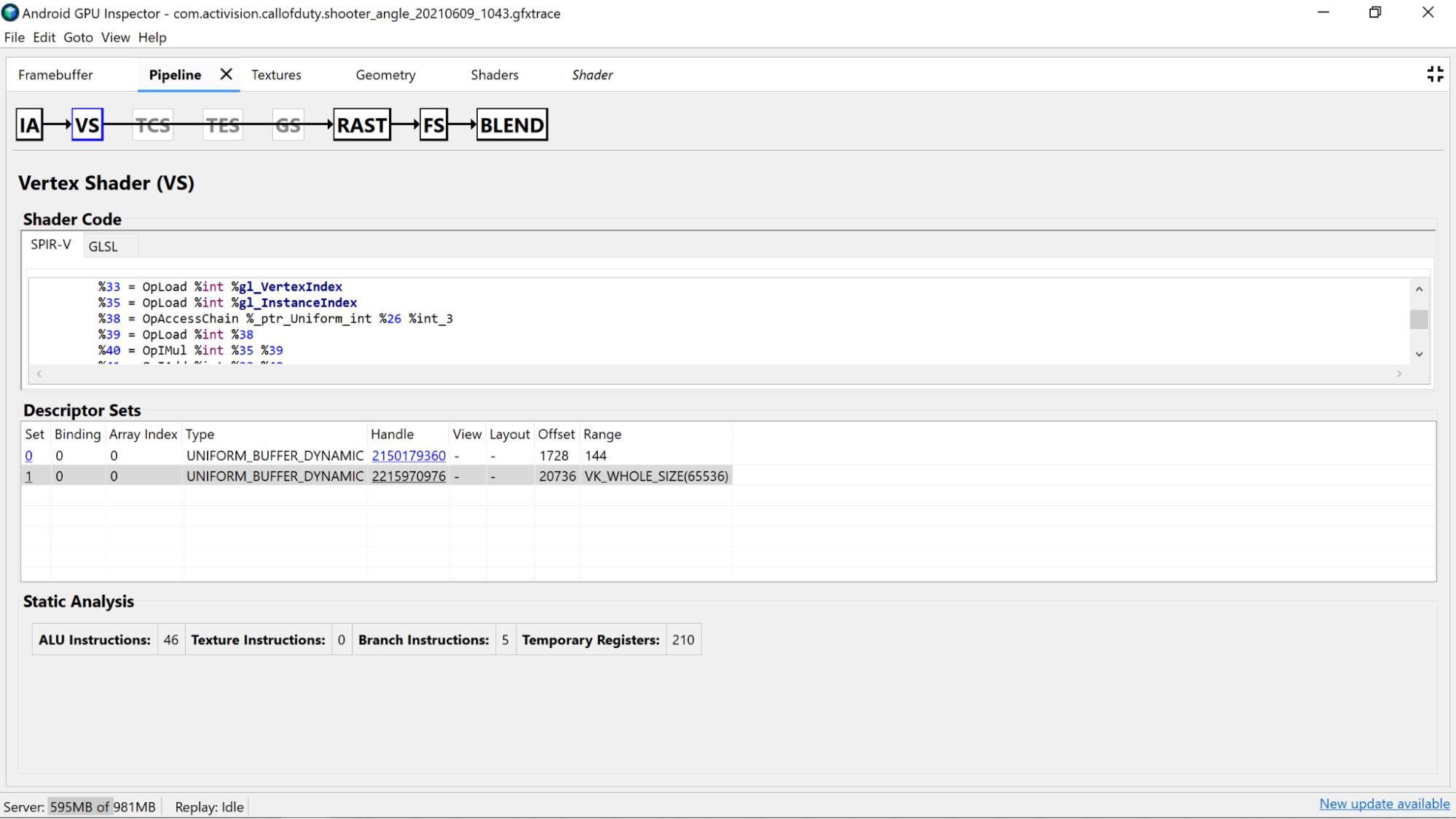

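With the AGI Frame Profiler, you can investigate your shaders by selecting a draw call from one of the render passes and then browsing the Vertex Shader or Fragment Shader section of the Pipeline pane.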

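Here you'll find useful statistics from static analysis of the shader code, along with the Standard Portable Intermediate Representation (SPIR-V) assembly that the GLSL was compiled to. A further tab shows a representation of the original GLSL, decompiled with SPIR-V Cross (with compiler-generated names for variables, functions, and so on), which gives additional context for the SPIR-V.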

Static analysis

Use the static analysis counters to view low-level operations in the shader.

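ALU Instructions: This count shows the number of ALU operations (additions, multiplications, divisions, and so on) executed in the shader, and is a good proxy for how complex the shader is. Try to minimize this value.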

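Refactoring common computations, or simplifying the computations done in the shader, can help reduce the number of instructions needed.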
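As a minimal sketch of this kind of refactoring, the fragment shader below computes a shared subexpression once and reuses it. The input names (`vNormal`, `vLightDir`) and the lighting formula are hypothetical and chosen only for illustration. Shader compilers often perform this elimination on their own, so compare the ALU Instructions counter before and after a change rather than assuming a gain.

```glsl
#version 450

// Hypothetical interpolated inputs, for illustration only.
layout(location = 0) in vec3 vNormal;
layout(location = 1) in vec3 vLightDir;
layout(location = 0) out vec4 outColor;

void main() {
    // Before: both normalize() calls and the dot() appear twice.
    //   float diffuse = max(dot(normalize(vNormal), normalize(vLightDir)), 0.0);
    //   float wrap    = dot(normalize(vNormal), normalize(vLightDir)) * 0.5 + 0.5;

    // After: the shared work is done once and the result is reused.
    vec3 n = normalize(vNormal);
    vec3 l = normalize(vLightDir);
    float nDotL = dot(n, l);

    float diffuse = max(nDotL, 0.0);
    float wrap = nDotL * 0.5 + 0.5;

    outColor = vec4(vec3(diffuse * wrap), 1.0);
}
```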

Texture Instructions: This count shows the number of times texture sampling occurs in the shader.

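- Texture sampling can be expensive depending on the type of texture being sampled, so cross-reference the shader code with the bound textures listed in the Descriptor Sets section to learn more about the texture types in use.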

- Avoid random access when sampling textures, because that access pattern works poorly with the texture cache.
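The difference between local and scattered access can be sketched as follows. The sampler name `uColorTex`, its binding, and the four-tap filter are assumptions made for this example, not something taken from AGI or a specific app. The commented-out lines show one way coordinates can end up scattered.

```glsl
#version 450

// Hypothetical texture binding, for illustration only.
layout(set = 0, binding = 0) uniform sampler2D uColorTex;

layout(location = 0) in vec2 vUv;
layout(location = 0) out vec4 outColor;

void main() {
    // Neighboring fragments receive neighboring vUv values, so these four
    // taps read texels that sit close together in the texture.
    vec2 texel = 1.0 / vec2(textureSize(uColorTex, 0));
    vec4 sum = texture(uColorTex, vUv + vec2(-0.5, -0.5) * texel)
             + texture(uColorTex, vUv + vec2( 0.5, -0.5) * texel)
             + texture(uColorTex, vUv + vec2(-0.5,  0.5) * texel)
             + texture(uColorTex, vUv + vec2( 0.5,  0.5) * texel);
    outColor = sum * 0.25;

    // By contrast, coordinates derived from a hash (or from another texture
    // fetch) can scatter reads across the whole image:
    //   vec2 scattered = fract(sin(vUv * 12345.678) * 43758.5453);
    //   outColor = texture(uColorTex, scattered);
}
```

This shader contains four texture sampling calls, which is the kind of operation the Texture Instructions counter reports.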

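Branch Instructions: This count shows the number of branch operations in the shader. Minimizing branching is ideal on parallel processors such as the GPU, and can even help the compiler find additional optimizations:

- Use functions such as `min`, `max`, and `clamp` to avoid branching on numeric values.

- Test the cost of computing versus branching. Because many architectures execute both paths of a branch, there are many cases where always doing the computation is faster than using a branch to skip it.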
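Both suggestions can be sketched in a small fragment shader. The inputs (`vExposure`, `vColor`, `vFogFactor`) and the `FOG_COLOR` constant are hypothetical names introduced for this example. Whether the branch-free form is actually faster depends on the GPU and compiler, so treat it as something to measure with the Branch Instructions and ALU Instructions counters.

```glsl
#version 450

// Hypothetical inputs, for illustration only.
layout(location = 0) in float vExposure;
layout(location = 1) in vec3 vColor;
layout(location = 2) in float vFogFactor;
layout(location = 0) out vec4 outColor;

const vec3 FOG_COLOR = vec3(0.6, 0.7, 0.8);

void main() {
    // Branching version:
    //   float e = vExposure;
    //   if (e < 0.0) e = 0.0; else if (e > 1.0) e = 1.0;
    float e = clamp(vExposure, 0.0, 1.0);

    vec3 c = vColor * e;

    // Branching version:
    //   if (vFogFactor > 0.0) c = mix(c, FOG_COLOR, vFogFactor);
    // Always computing the blend gives the same result: when the factor is
    // zero or negative, max() yields 0.0 and mix() returns c unchanged.
    c = mix(c, FOG_COLOR, max(vFogFactor, 0.0));

    outColor = vec4(c, 1.0);
}
```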

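Temporary Registers: These are fast, on-core registers that hold the intermediate results of GPU computations. Only a limited number of registers is available; beyond that limit, the GPU has to spill intermediate values to slower off-core memory, which reduces overall performance. (The limit varies by GPU model.)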

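The number of temporary registers used can be higher than expected if the shader compiler performs transformations such as loop unrolling, so it's worth cross-referencing this value with the SPIR-V or the decompiled GLSL to see what the code is doing.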

Shader code analysis

Examine the decompiled shader code itself to determine whether any improvements are possible.

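- Precision: The precision of shader variables can affect your app's GPU performance.

  - Use the `mediump` precision modifier on variables wherever possible, because medium-precision (`mediump`) 16-bit variables are usually faster and more power-efficient than full-precision (`highp`) 32-bit variables.

  - If you don't see any precision qualifiers in the shader, either on variable declarations or at the top of the shader in the form `precision precision-qualifier type`, the shader defaults to full precision (`highp`). Make sure to check the variable declarations as well.

  - For the same reasons, `mediump` is also preferred for vertex shader outputs. It additionally reduces memory bandwidth and can reduce the temporary register usage needed for interpolation.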

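- Uniform Buffers: Keep uniform buffers as small as possible (while still following alignment rules). This makes computations more cache-friendly and may allow uniform data to be promoted to faster on-core registers.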

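- Remove unused Vertex Shader outputs: If you find vertex shader outputs that the fragment shader doesn't use, remove them from the shader to free up memory bandwidth and temporary registers.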

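- Move computation from the Fragment Shader to the Vertex Shader: If the fragment shader performs computations that don't depend on state specific to the fragment being shaded (or whose results can be interpolated correctly), moving them to the vertex shader is ideal. In most apps, the vertex shader runs far less often than the fragment shader.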
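The points in this list can be combined in one hedged sketch of a vertex and fragment shader pair. All names here (`PerDraw`, `uDraw`, `lightDirModel`, `ambient`, `uAlbedo`, `vLight`, and the attribute locations) are hypothetical, and the example assumes that per-vertex diffuse lighting is visually acceptable for the mesh in question, which is not always the case.

```glsl
#version 450
// Vertex shader (sketch).

// std140 layout: the float fills the 4 bytes of padding that would otherwise
// follow the vec3, so the block occupies 80 bytes instead of 96.
layout(std140, set = 0, binding = 0) uniform PerDraw {
    mat4 mvp;            // bytes 0..63
    vec3 lightDirModel;  // bytes 64..75, assumed normalized, model space
    float ambient;       // bytes 76..79
} uDraw;

layout(location = 0) in vec3 inPosition;
layout(location = 1) in vec3 inNormal;
layout(location = 2) in vec2 inUv;

// mediump outputs: less data to write out and to interpolate.
layout(location = 0) out mediump vec2 vUv;
layout(location = 1) out mediump float vLight;
// An output that the fragment shader never reads (for example a
// world-space position) has been removed from this interface.

void main() {
    // The diffuse term is computed once per vertex and then interpolated,
    // instead of being recomputed for every fragment.
    mediump float nDotL = max(dot(normalize(inNormal), uDraw.lightDirModel), 0.0);
    vLight = uDraw.ambient + (1.0 - uDraw.ambient) * nDotL;
    vUv = inUv;
    gl_Position = uDraw.mvp * vec4(inPosition, 1.0);
}
```

```glsl
#version 450
// Fragment shader (sketch).

// Default precision for floats declared without an explicit qualifier.
precision mediump float;

layout(set = 0, binding = 1) uniform mediump sampler2D uAlbedo;

layout(location = 0) in mediump vec2 vUv;
layout(location = 1) in mediump float vLight;
layout(location = 0) out mediump vec4 outColor;

void main() {
    // Only the texture fetch and one multiply remain per fragment.
    vec4 albedo = texture(uAlbedo, vUv);
    outColor = vec4(albedo.rgb * vLight, albedo.a);
}
```

Two caveats apply. Texture coordinates for large textures may need `highp` to avoid visible precision artifacts, so `vUv` is a candidate to keep at full precision. Moving lighting to the vertex shader changes the result on coarsely tessellated geometry, so verify the rendered output as well as the counters.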